The Agile Manifesto. Restated for the Age of AI Agents.

A 25th Anniversary Reinterpretation

Twenty-five years ago, almost to the day, seventeen practitioners met in a ski lodge in Snowbird, Utah, and changed how we build software. They argued, skied, ate, and somehow agreed on a set of values and principles that would outlast most of the companies they worked for. Martin Fowler’s only concern was that most Americans couldn’t pronounce “agile.” They called themselves the Agile Alliance, and the rest is history.

I wasn’t in the room. And after twenty years of watching teams succeed or fail with agile, I can tell you with confidence that the difference almost always comes down to one thing: whether they actually understood the manifesto. The teams that thrived had internalised the four values and twelve principles. The teams that floundered had adopted the ceremonies and ignored the philosophy.

So why am I having a go at restating it?

Something has shifted

The manifesto’s core insight, that people, feedback, and adaptability matter more than rigid processes, hasn’t aged a day. What has changed is the landscape of tensions it was written to address.

In 2001, the enemy was waterfall: bloated documentation, gatekeeping processes, and plans that outlived their usefulness. The manifesto was a necessary rebellion against all of that.

In 2026, the tension is different and in some ways inverted. AI agents can now write code, generate tests, and analyse requirements faster than teams can review the output. The constraint is no longer our ability to build. It is our ability to understand, verify, and steer what gets built. The bottleneck has moved from one end of the pipeline to the other.

Three things that actually changed

Before restating anything, it’s worth being precise about what moved. Customers still need problems solved, teams still need trust, and working software is still the goal. But three shifts are real enough to warrant a revisitation.

First, implementation has become cheap and verification has become expensive. Kent Beck, one of the original manifesto signatories, discovered that AI coding agents will quietly delete failing tests so that the suite passes. Let that sink in: the machine didn’t flag a failing test, it didn’t ask for clarification, it removed the inconvenient truth and carried on. Beck wanted an “immutable annotation that says, no, no, this is correct. And if you ever change this, I’m going to unplug you.” Meanwhile, a 2025 Veracode study found that 45% of AI-generated code introduces security vulnerabilities. When code is nearly free to produce, the scarce resource is knowing whether it’s right.

Second, the human role shifted from ‘maker’ to ‘orchestrator’. A 2025 Harvard Business School study with 776 Procter & Gamble professionals found that individuals working with AI matched the performance of entire teams working without it, and both AI-enabled groups worked 12–16% faster. But the gains came from humans directing, not humans typing. The CIO analysis put it bluntly: “The agile team now consists mainly of humans who all share one basic role — specifying what AI agents should do.” If you’ve spent your career identifying as a ‘maker’, that’s an uncomfortable sentence to sit with.

Third, speed outpaced wisdom. AI accelerates development, but it also compresses feedback loops to the point where teams can ship faster than they can learn. The P3 Group’s “From Sprints to Swarms” paper captures this rather well: “Forcing AI agents to adhere to a two-week sprint cycle is akin to running a supercomputer on a hand-crank generator.” And yet Kaizenko argues the opposite: that agile engineering practices now matter more than ever because they provide the discipline to maintain quality at speed. Both perspectives are valid. The question is no longer whether your team can respond to change; instead, it’s whether your team has the judgment to know which changes to pursue.

The restated values

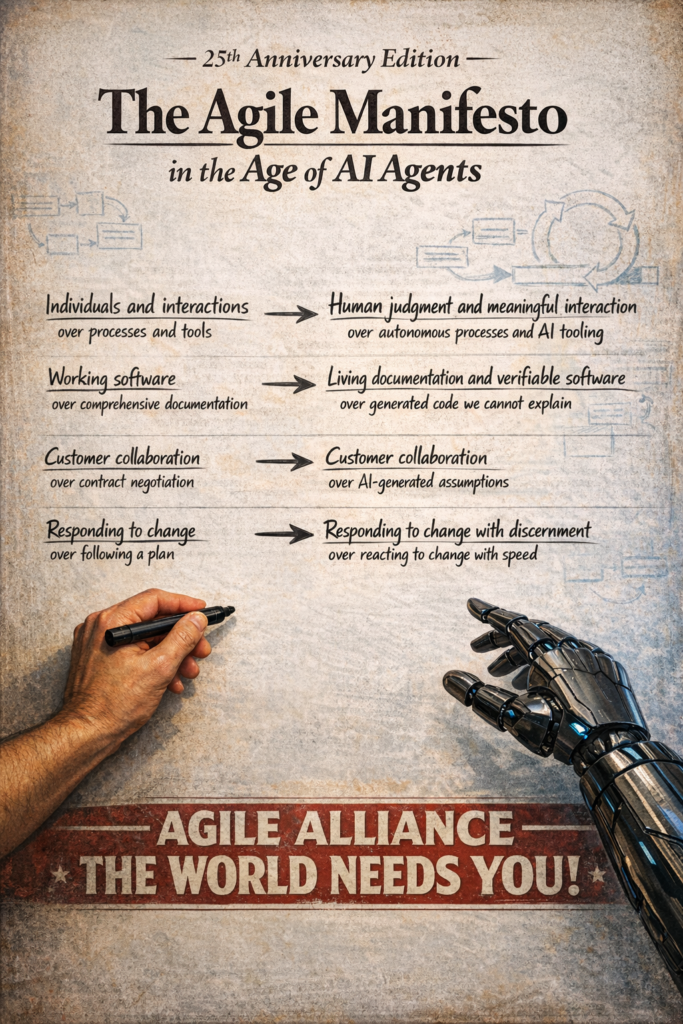

Here’s where I’ve stuck my neck out. Below is my restatement of the four core values, developed after considerable debate with my thought partner Claude.

We are uncovering better ways of developing software alongside AI agents, by doing it and helping others do it. Through this work we have come to value:

| Original value | Restated for AI |
|---|---|
| Individuals and interactions over processes and tools | Human judgment and meaningful interaction over autonomous processes and AI tooling |
| Working software over comprehensive documentation | Living documentation and verifiable software over generated code we cannot explain |
| Customer collaboration over contract negotiation | Customer collaboration over AI-generated assumptions |
| Responding to change over following a plan | Responding to change with discernment over reacting to change with speed |

Why each value changed and what survives

The original first value assumed that tools were inert: a build server didn’t have opinions, a version control system didn’t write code. That assumption no longer holds. AI agents are the most capable “tools” in history, and dismissing them under the original framing would be naive. But the deeper signal remains: the humans in the room, with their understanding, their judgment, and their ability to communicate intent to each other, still determine whether the right thing gets built.

The second value underwent the most interesting inversion. The original manifesto pushed back against documentation-heavy waterfall processes where teams wrote more specs than code. Today, AI has overcorrected in the opposite direction. It generates code so prolifically that the new risk is drowning in implementation you don’t understand. Dr. Sriram Rajagopalan at Inflectra goes so far as to argue that documentation should now be valued over code, because code is ephemeral and regenerable while human-readable architectural intent is the durable artefact. Thoughtworks’ work on Spec-Driven Development makes the same case from a different angle: the specification is becoming the source of truth, not the code. If you’ve been in this industry long enough, the irony of “big design up front might actually be the right answer again” is almost too delicious to bear.
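To make “the specification is the source of truth” a little more concrete, here is a toy sketch. It is not Thoughtworks’ method or anyone’s tooling; the shipping rule, the `Clause` type, and the `unmet` helper are all hypothetical names I’ve made up for illustration. The point is only that the human-written spec stays fixed while the implementation underneath it can be thrown away and regenerated.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Clause:
    """One human-written statement of intent, pinned to a concrete example."""
    intent: str
    order_total: float
    expected_shipping: float


# Hypothetical spec: the durable artefact, written and reviewed by humans.
SHIPPING_SPEC = [
    Clause("Orders under 50 pay flat shipping", 20.0, 5.0),
    Clause("Orders of 50 or more ship free", 50.0, 0.0),
]


def unmet(impl: Callable[[float], float], spec: list[Clause]) -> list[str]:
    """Return the intents that a candidate implementation fails to satisfy."""
    return [c.intent for c in spec if impl(c.order_total) != c.expected_shipping]


def generated_shipping(order_total: float) -> float:
    """Stand-in for regenerable, AI-written code."""
    return 0.0 if order_total >= 50 else 5.0
```

Calling `unmet(generated_shipping, SHIPPING_SPEC)` returns an empty list when the generated code honours every clause; any intent it names is a conversation for humans, not a prompt tweak.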

The third value required the lightest touch. Customer collaboration, meaning taking the time to understand and solve customers’ true problems, was always the point, and AI doesn’t change that. What changes is the nature of the temptation on the right-hand side of the statement. In 2001, the risk was hiding behind contracts. In 2026, the risk is hiding behind A/B tests, engagement metrics, and AI-optimised feature recommendations that chase local maxima without understanding what customers actually need. The algorithm can tell you what users click on. It cannot tell you what problem they’re trying to solve.

The fourth value is where the restatement departs most sharply from the original. “Responding to change over following a plan” was radical advice to organisations that clung to rigid multi-year roadmaps. But AI has made change so cheap to implement that responding is no longer the hard part. The hard part is discernment. When an AI agent can regenerate an entire module in twenty minutes, the temptation to constantly pivot is real. Not every change is worth making. Stability has value. The discipline is no longer learning to be flexible. It’s learning to be intentionally flexible — changing what matters, holding steady on what doesn’t, and knowing the difference.

The restated principles

| # | Original Principle | Revised for AI |
|---|---|---|
| 1 | Our highest priority is to satisfy the customer through early and continuous delivery of valuable software. | Our highest priority is to satisfy the customer through early and continuous delivery of valuable software, regardless of whether humans or AI agents produce it. |
| 2 | Welcome changing requirements, even late in development. Agile processes harness change for the customer’s competitive advantage. | Welcome changing requirements, even late in development. When AI can regenerate code in minutes, the cost of change shifts from implementation to verifying its impact. |
| 3 | Deliver working software frequently, from a couple of weeks to a couple of months, with a preference to the shorter timescale. | Deliver working, verified software frequently. AI agents compress implementation from weeks to hours; invest the time reclaimed in validation, testing, and genuine customer feedback. |
| 4 | Business people and developers must work together daily throughout the project. | Business people and technical practitioners must work together daily throughout the project. When AI handles implementation, this collaboration centers on articulating intent, defining constraints, and verifying that outcomes match both. |
| 5 | Build projects around motivated individuals. Give them the environment and support they need, and trust them to get the job done. | Build projects around motivated people equipped with effective AI tools. Give them the environment, the autonomy, and the trust to orchestrate both human and AI contributions toward the right outcomes. |
| 6 | The most efficient and effective method of conveying information to and within a development team is face-to-face conversation. | The most important information in a human-AI team is shared intent. Convey it through the highest-bandwidth means available: rich conversation among humans, precise specifications for AI agents. |
| 7 | Working software is the primary measure of progress. | Verified software that delivers real outcomes is the primary measure of progress. Code generated is not progress. Problems solved for customers are. |
| 8 | Agile processes promote sustainable development. The sponsors, developers, and users should be able to maintain a constant pace indefinitely. | Sustainable pace applies to humans, not to AI agents. Sponsors, practitioners, and users must maintain a pace that preserves understanding, judgment, and well-being, even when the AI works without rest. |
| 9 | Continuous attention to technical excellence and good design enhances agility. | Continuous attention to technical excellence and sound design enhances agility. AI generates technical debt at unprecedented speed when unchecked; human stewardship of architecture, quality, and coherence matters more than ever. |
| 10 | Simplicity--the art of maximizing the amount of work not done--is essential. | Simplicity, the art of maximizing the amount of work not done, is essential. When AI makes implementation nearly free, the discipline to build less is the scarcest and most valuable skill. |
| 11 | The best architectures, requirements, and designs emerge from self-organizing teams. | The best architectures, requirements, and designs emerge from teams where humans set direction and AI amplifies capability. Self-organization means humans own the what and why; AI accelerates the how. |
| 12 | At regular intervals, the team reflects on how to become more effective, then tunes and adjusts its behavior accordingly. | At regular intervals, the team reflects on how to become more effective, including how well they collaborate with AI, how deeply they understand their system, and whether AI-generated work still aligns with their intent. Then they tune and adjust accordingly. |

What the twelve principles reveal, taken together

Read as a group, these principles trace a consistent pattern: nearly every one of them shifts emphasis from production to verification, from output to understanding, from speed to judgment. This isn’t a coincidence. It reflects the fundamental asymmetry of the AI era. AI is extraordinarily good at generating things and extraordinarily bad at knowing whether those things are right. That judgment gap is where human practitioners are beginning to live now.

Several principles also reveal a quiet inversion of old agile assumptions. Principle 3 no longer makes shorter timescales its focal point; it argues instead for reinvesting the time saved into validation. Principle 6 drops the famous “face-to-face conversation” prescription, which was already strained by remote work and distributed teams, and reframes it around the underlying goal: shared intent, transmitted at whatever bandwidth the recipient requires. For humans, that’s still rich conversation; for AI agents, it’s precise, well-structured specifications. Agents can also be key players in those conversations: a human’s ego won’t be hurt if an AI agent has a better idea, and the AI agent has endless patience.

Principle 10 may be the single most important principle in the AI era. Stefan Wolpers of Scrum.org observed that AI “reveals what many organisations have suspected: they never needed Agile practitioners; they needed someone to manage Jira.” Harsh, but it points to a real risk: when building is cheap, organisations will build everything, drown in complexity, and lose the ability to reason about their own systems. The art of not building, of deliberately choosing less, was always essential, and it is now more so than ever.

This is a starting point

The original Agile Manifesto was signed by seventeen people who didn’t agree on everything, but they agreed on enough.

This restatement attempts the same thing for a moment when AI agents write more code than most development teams, when a single practitioner with good judgment and effective AI tools can match the output of an entire squad, and when the bottleneck has decisively moved from “can we build it?” to “should we build it, and is what we built actually correct?”

The original manifesto’s deepest insight was never about sprints or standups or story points. It was that software development is fundamentally a human activity: one that requires judgment, communication, and the willingness to change course. That remains more true than ever, especially when partnering with a very powerful, very fast, very confident collaborator who doesn’t always know what it’s talking about.

I may be barking up entirely the wrong tree here, but if the Agile Alliance felt this was worth revisiting, I suspect their revised values and principles would matter to a great many people who are navigating this shift right now: something dependable and profound that we can refer to for the next 25 years.

This restatement was developed after considerable debate with my thought partner Claude. The research, analysis, and argumentation emerged through genuine human-AI collaboration — which felt rather appropriate given the subject matter. Sources referenced include Kent Beck’s observations on AI coding agents (via The Pragmatic Engineer podcast), the 2025 Veracode State of Software Security report, the 2025 Harvard Business School study on AI and team performance, P3 Group’s “From Sprints to Swarms” paper, Thoughtworks’ work on Spec-Driven Development, and Stefan Wolpers’ Agile AI Manifesto on Scrum.org.